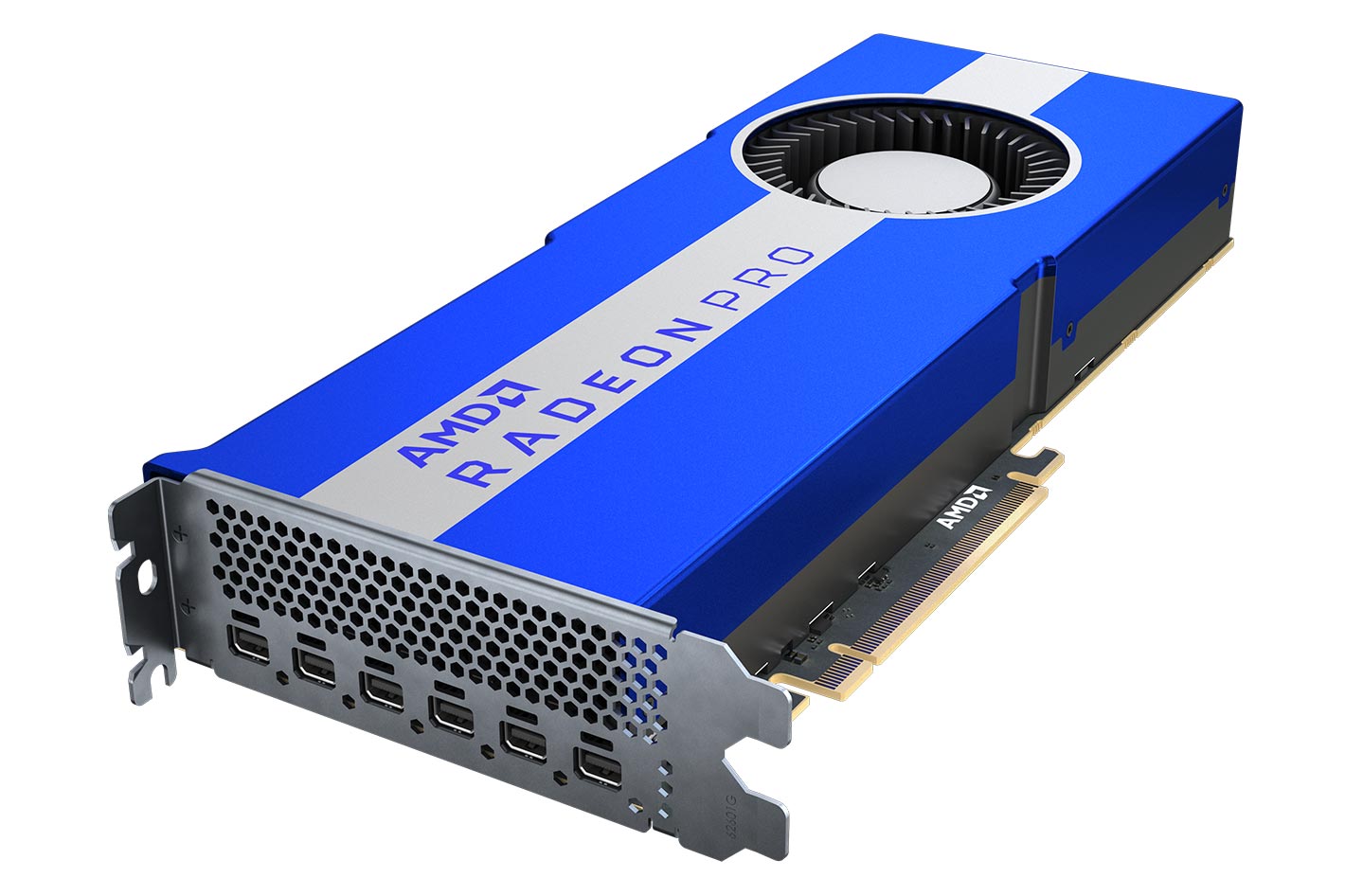

MLC-LLM makes it possible to compile LLMs and deploy them on AMD GPUs using ROCm with competitive performance. More specifically, AMD Radeon™ RX 7900 XTX gives 80% of the speed of NVIDIA® GeForce RTX™ 4090 and 94% of the speed of NVIDIA® GeForce RTX™ 3090 Ti for Llama2-7B/13B. Besides ROCm, our Vulkan support allows us to generalize LLM deployment to other AMD devices, for example, a SteamDeck with an AMD APU.

There have been many LLM inference solutions since the bloom of open-source LLMs. Most of the performant inference solutions are based on CUDA and optimized for NVIDIA GPUs. In the meantime, with the high demand for compute availability, it is useful to bring support to a broader class of hardware accelerators. AMD is one potential candidate.

From the spec comparison, we can see that AMD's RX 7900 XTX is a good match for NVIDIA's RTX 4090 and RTX 3090 Ti.

- All have 24GB memory, which means they can fit models of the same size.
- 4090 has 2x more FP16 performance than 7900 XTX, while 3090 Ti has 1.3x more FP16 performance than 7900 XTX. Latency-sensitive LLM inference is mostly memory bound, so the FP16 performance is not a bottleneck here.
- RX 7900 XTX is 40% cheaper than RTX 4090. It is harder to compare the price of 3090 Ti as that was a previous generation. We put it here as a reference point to provide more information.

At a high level, we can find that AMD 7900 XTX is comparable to RTX 3090 Ti from the hardware spec perspective.

Hardware is not necessarily the reason why AMD lagged in the past. The main gaps were due to a lack of software support and optimizations for the relevant models. There are two factors in the ecosystem that are starting to change the picture:

- AMD is trying to catch up with investments in the ROCm stack.
- Emerging technologies like machine learning compilation help to reduce the overall cost of more universal software support across backends.

In this post, we are taking a deep look at how well AMD GPUs can do compared to a performant CUDA solution on NVIDIA GPUs as of now.

What is machine learning compilation (MLC)

Machine learning compilation is an emerging technology that compiles and automates the optimization of machine learning workloads. Instead of crafting specific kernels for each individual backend like ROCm or CUDA, an MLC solution automatically generates code for different backends. Here we leverage MLC-LLM, an ML compilation-based solution that offers high-performance universal deployment for LLMs. MLC-LLM builds on top of Apache TVM Unity, a machine-learning compilation stack that offers productive Python-first development and universal deployment. MLC-LLM brings state-of-the-art performance for a wide variety of backends, including CUDA, Metal, ROCm, Vulkan, and OpenCL, spanning both server-class GPUs and mobile devices (iPhone and Android). At a high level, the framework lets the user take open language models and compile them with a Python-based workflow, including APIs to transform computational graphs and to optimize the layout and scheduling of GPU kernels, and then deploy them natively on platforms of interest.

There are several possible ways to support AMD GPUs: ROCm, OpenCL, Vulkan, and WebGPU. The ROCm stack is what AMD has recently been pushing, and it has many building blocks that correspond to those of the CUDA stack. Vulkan is the latest graphics standard and offers the widest range of support across GPU devices. WebGPU is the latest web standard that allows the computation to run in web browsers. While there are so many possible ways, few ML software solutions build for anything other than CUDA, largely due to the engineering cost of replicating a stack for new hardware or a new GPU. We support automatic code generation without having to recraft GPU kernels for each backend, and bring support to all of these ways.
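As a rough illustration, the speed and price figures quoted in this post can be combined into a single relative speed-per-dollar number. The sketch below is only back-of-the-envelope arithmetic: it assumes "40% cheaper" means the RX 7900 XTX costs 60% of the RTX 4090 price, and the function and constant names are hypothetical, not part of MLC-LLM.

```python
# Relative figures quoted in the post, both measured against the RTX 4090.
RELATIVE_SPEED = 0.80  # RX 7900 XTX reaches 80% of RTX 4090 speed (Llama2-7B/13B)
RELATIVE_PRICE = 0.60  # assumption: "40% cheaper" read as 60% of the RTX 4090 price


def speed_per_dollar_ratio(relative_speed: float, relative_price: float) -> float:
    """Return speed per unit price relative to the baseline GPU (hypothetical helper)."""
    if relative_price <= 0:
        raise ValueError("relative_price must be positive")
    return relative_speed / relative_price


ratio = speed_per_dollar_ratio(RELATIVE_SPEED, RELATIVE_PRICE)
print(f"RX 7900 XTX speed per dollar vs RTX 4090: {ratio:.2f}x")
```

Under these assumptions the ratio comes out above 1, meaning the RX 7900 XTX delivers more inference speed per dollar than the RTX 4090 even though its absolute speed is lower. Actual prices vary, so this should be read as a way to reason about the trade-off and not as a measured result.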
